Abstract

The historical roots of the current-day tech sector are infected with eugenic ideals, misogyny, and fascism. It's not hard to trace a line from William Shockley, co-inventor of the transistor, to current powerhouses such as Peter Thiel, Sam Altman, Elon Musk, and others, each espousing subtle or not-so-subtle visions of a techno-utopian future devoid of "low IQ" citizens.

Whether purposefully or not (maybe a bit of both), technology has aided and abetted the creation of an environment that favors the wealthy and privileged and preys on the disadvantaged.

Open software provides an avenue to tip the scales from a ruthless market toward an economy of empathy. We must emerge from the grasp of our troubled past, not by ignoring it, but by reckoning and repairing the broken pieces.

Background

In Palo Alto: A History of California, Capitalism, and the World, Malcolm Harris retells the history of Palo Alto and Silicon Valley through the lens of class, in terms of both struggle and exploitation.

He refers to the region as a "whiteness cartel" and backs that up with a vivid history, from settlers murdering natives in droves to the effects that capitalism has wrought on the region, and ultimately how it has shaken the world.

In spite of the forces laid out in the book, Harris does not succumb to a predetermined outlook.

“[O]nly by understanding how we’re made use of can we start to distinguish our selves from our situations,” he writes.

It is in this vein that I decided to write about this subject.

The state of technology (and, one could say, of academia as well) is one that lacks diversity and opportunity for disenfranchised people. This is no accident. It is the result of a system that continues to celebrate and empower the same forces that have been dominant for over a century.

What is, or should be, our reaction to this?

Notes

This covers only a slice of the all-encompassing evidence that our technology leaders are continuing

I. Sama

Samasource, also known as Sama, is "a San Francisco-based firm that employs workers in the Global South to label data for Silicon Valley clients like Google, Meta and Microsoft."

That sentence alone should give you pause. Why is a San Francisco firm employing workers in the Global South?

OpenAI (a previous client) claims that Sama workers contributed toward a tool to detect toxic content, which was eventually built into ChatGPT.

Employees of Sama, and other such companies, are tasked with the job of sanitizing data for large language models. Their average wage is less than $2/hour.

In an exclusive by TIME magazine in 2023, workers described their experience:

One Sama worker tasked with reading and labeling text for OpenAI told TIME he suffered from recurring visions after reading a graphic description of a man having sex with a dog in the presence of a young child. “That was torture,” he said.

Another report at Coda Story by Erica Hellerstein describes the working conditions like this:

Kauna Malgwi was once a moderator with Sama in Nairobi. She was tasked with reviewing content on Facebook in her native language, Hausa. She recalled watching coworkers scream, faint and develop panic attacks on the office floor as images flashed across their screens.

And also...

A 28-year-old moderator named Johanna described a similar decline in her mental health after watching TikTok videos of rape, child sexual abuse, and even a woman ending her life in front of her own children.

As the appetite for data grows, the market for data workers also increases.

Two months ago The Bureau of Investigative Journalism highlighted the gig-work giant Appen, which recruits over a million people around the world to do data work, mostly in the Global South.

The report links this data work to secret US military projects.

Hear more about this on Mystery AI Hype Theater 3000.

How did we even get here?

II. Stanford

The university's first president, David Starr Jordan, is deemed one of the most prominent eugenicists of the early 20th century.

Many eugenic researchers followed, including Lewis Terman and Ellwood Cubberley.

Terman adapted and revised a French scale of intelligence and dubbed it the Stanford-Binet IQ test. Cubberley helped to popularize its use.

Ben Maldonado at The Stanford Daily writes:

Beyond utilizing Terman’s IQ test, which was developed with the assumption that non-white races were inferior, Cubberley viewed certain races as fundamentally less intelligent with less desirable cultures.

Cubberley took inspiration from factories, and sought to emulate factory-techniques in the realm of education.

Ellwood Cubberley and his cult of efficiency transformed the way education functioned, emphasizing production, cost reduction and standardized intelligence all shaped by race and heredity. [Emphasis mine]

Decades later, in the 1960s, William Shockley, credited with bringing silicon to Silicon Valley, was brought to Stanford as a physicist and professor.

Per the Southern Poverty Law Center (SPLC):

Despite having no training whatsoever in genetics, biology or psychology, Shockley devoted the last decades of his life to a quixotic struggle to prove that black Americans were suffering from “dysgenesis,” or “retrogressive evolution,” and advocated replacing the welfare system with a “Voluntary Sterilization Bonus Plan,” which, as its name suggests, would pay low-IQ women to undergo sterilization.

In spite of having his theories widely condemned, he succeeded in rehabilitating a eugenic ideology to a more politically savvy generation of academic racists.

It was around this time that Charles Murray, co-author of The Bell Curve, argued that average IQ differences between racial/ethnic groups were at least partly genetic in origin.

Other contemporaries evolved eugenic ideologies—such as using genome sequencing to increase intelligence, or coupling school funding with standardized test performance, in programs like "No Child Left Behind."

Back at Stanford, there's also John McCarthy, another well-regarded careerist at the university, also lauded with honors and accolades.

He's also known for coining the term "Artificial Intelligence" as a clever way to ensure funding.

In 2004, he wrote a post on Usenet, still readable today, titled Technology and the Position of Women, in which he envisions "equality" for women.

He posits that, in the future, women would be empowered to follow a career, given self-driving cars could aid in chauffeuring kids to school, and household robots would take over household chores.

He also made some interesting distinctions between men and women:

The very highest level of potential in science and mathematics, which only one in a million men can attain, the fraction of women who can attain it may be biologically smaller. [Emphasis mine]

And when speaking of social movements that seek to correct occupational injustices through policies or quotas:

At present there are social movements and people with institutional power who regard there being fewer women than men at some level of some occupation as an injustice that must be corrected by quotas. This is a mistake and will not succeed because of differences in ability and motivations between males and females. [Emphasis mine]

Are these biases still present today?

A 2025 report from the US Bureau of Labor Statistics shows that in the professions listed as "computer programmer" or "software developer," 20% or fewer of workers identified as women, fewer than 7% as Black, and fewer than 10% as Latino.

Certainly there are deeper, systemic issues in these figures, but again, these scenarios do not happen by accident.

So, my question at this point is—what kind of world are we building?

III. Utopia

A 1996 listserv email written by a 23-year-old graduate student at the London School of Economics is not coy in its blatant racism.

Blacks are more stupid than whites. I like that sentence and think it is true. But recently I have begun to believe that I won’t have much success with most people if I speak like that. They would think that I were [sic] a “racist”: that I disliked black people and thought that it is fair if blacks are treated badly. I don’t. It’s just that based on what I have read, I think it is probable that black people have a lower average IQ than mankind in general, and I think that IQ is highly correlated with what we normally mean by “smart” and stupid” [sic]. I may be wrong about the facts, but that is what the sentence means for me. For most people, however, the sentence seems to be synonymous with: I hate those bloody [the N-word, included in the original email, has been redacted]!!!!

Its author went on to become an Oxford University philosopher who has been profiled by The New Yorker and has become highly influential in Silicon Valley.

His name is Nick Bostrom.

When Bostrom was recently reminded of his email by philosopher Émile Torres, Bostrom did issue an apology. But subtle hints of eugenic-adjacent views persist.

I would leave to others [sic], who have more relevant knowledge, to debate whether or not in addition to environmental factors, epigenetic or genetic factors play any role. What about eugenics? Do I support eugenics? No, not as the term is commonly understood.

I must mention, however, that Oxford University did go on to honor the apology and endorse his remorse.

However, as recently as 2017, Bostrom has written about how too many lower IQ people are having children, and has previously argued that IQ gains could be made by genetically altering embryos, straddling bioethical lines—favoring "enhancements" over "therapies."

Bostrom is a key figure in Longtermism, which "posits that one’s highest ethical duty in the present is to increase the odds, however slightly, of humanity’s long-term survival and the colonization" of distant galaxies.

On paper, Longtermism emphasizes a type of utilitarian ethics, but in practice, it provides cover to billionaires and their twisted, xenophobic views.

Timnit Gebru, Founder and Executive Director of the DAIR Institute, along with Émile Torres, whom I mentioned earlier, coauthored The TESCREAL bundle: Eugenics and the promise of utopia through artificial general intelligence.

In the paper, they highlight Bostrom's vision of the future in which:

[He] not only imagined a utopian future enabled by radical human enhancement, but noted that if humanity colonizes the universe and creates planet-sized computers to run virtual-reality worlds populated by digital people, the future posthuman population could be enormous.

This view of the future was further expounded upon by William MacAskill in his book, What We Owe The Future.

MacAskill, it should be noted, is cofounder of the Effective Altruist movement, a parallel belief system to Longtermism.

In the book, MacAskill argues that technological progress opens up humanity to an existential threat, but he also assesses that "technological stagnation" is a terrifying prospect.

The marriage of this belief system and technology is not far off.

IV. Convergence

In 2012, MacAskill became the president of the Centre for Effective Altruism. It was housed in the same offices as Bostrom's Future of Humanity Institute.

They shared a philosophical language that now appeals to tech CEOs and VC elites.

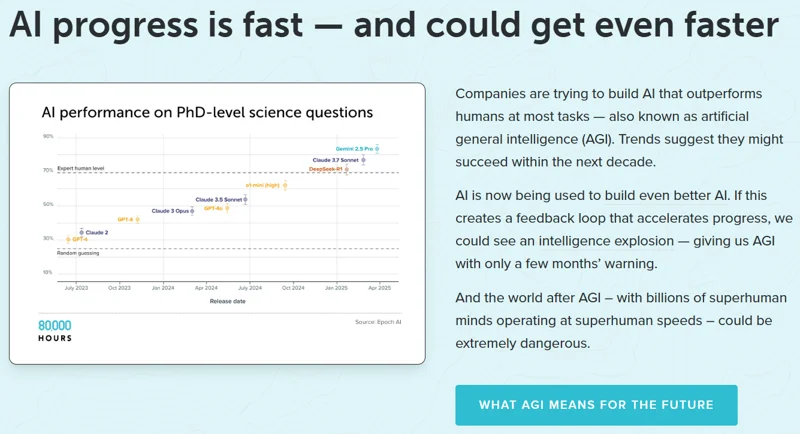

The organization 80,000 Hours, founded in part by MacAskill, is a non-profit that, according to its website, "provides research and support to help talented people move into careers that tackle the world's most pressing problems... your choice of career is the biggest ethical decision you'll ever make."

What are the top two high-impact careers that fit that description?

- AI governance and policy

- AI safety technical research, otherwise known as AI alignment

From their own website, 80,000 Hours vigorously envisions a world in which artificial general intelligence (or AGI) routinely outperforms humans at most tasks.

And the world after AGI — with billions of superhuman minds operating at superhuman speeds — could be extremely dangerous.

This is the same language utilized by tech leaders and VC funders.

Elon Musk, in 2022, retweeted an announcement of MacAskill's book, What We Owe the Future, stating "Worth reading. This is a close match for my philosophy."

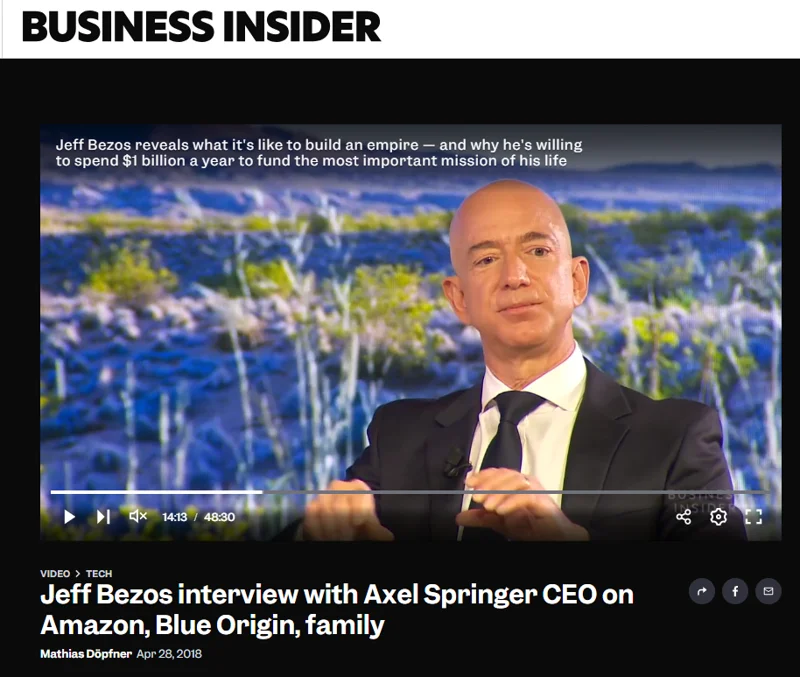

Jeff Bezos has made statements about breaking free from stasis and stagnation, waxing poetic about a solar system that could easily support a trillion humans.

I mentioned Timnit Gebru earlier. She was fired by Google in 2020 over a research paper she co-authored on the dangers of LLMs.

Fired by the same Google that hired Ray Kurzweil in 2012 and has employed him since. Kurzweil is known primarily for his book "The Singularity Is Near". In a 2009 documentary titled Transcendent Man, he quips:

Does God exist? I would say, 'Not yet.'

Sam Altman, responding on his blog to the recent Molotov cocktail thrown at his house, wrote the following:

Once you see AGI you can’t unsee it.

Dario Amodei, as recently as February of this year, during a podcast with Dwarkesh Patel, said that AGI is anywhere from one to three years away.

In 2024, he wrote a blog post titled "Machines of Loving Grace" in which he envisions super intelligence that is "smarter than a Nobel Prize winner."

This self-replicating intelligence becomes autonomous, running millions of instances of itself. Effectively, he describes this phenomenon as a "country of geniuses in a datacenter."

More darkly, the funders of these technologies are fueled by sociopathic visions of the future.

Peter Thiel has been espousing apocalyptic visions, and he sees salvation in a transhumanist future enabled by artificial intelligence.

Power VC funder Marc Andreessen authored The Techno-Optimist Manifesto to paint a picture of the future he envisions.

We believe not growing is stagnation, which leads to zero-sum thinking, internal fighting, degradation, collapse, and ultimately death.

We believe intelligence is the ultimate engine of progress. Intelligence makes everything better.

We believe we are poised for an intelligence takeoff that will expand our capabilities to unimagined heights.

We believe Artificial Intelligence is our alchemy, our Philosopher’s Stone – we are literally making sand think.

We believe, as Richard Feynman said, “Science is the belief in the ignorance of experts.”

Feynman, it should be noted, is one of the 20th century's most famous and influential physicists. He was involved in the Manhattan Project and earned a Nobel Prize for his work in quantum physics.

But that's not all he's known for.

In the publication Big Think, Ethan Siegel takes a closer look at Feynman's autobiography and interviews individuals who were in his circle.

Above all, what Siegel discovered was Feynman's "disdain and disrespect for practically everyone else in the world besides himself."

Feynman’s sexism was so extreme that it triggered protests in California numerous times, and he felt no qualms about detailing his pick-up-artist moves in his autobiography, notably referring to his potential lovers as “bitches” when considering them.

His self-centered and misogynistic lifestyle is not unique among leaders and funders of the tech sector.

Take, for instance, what we've learned about the Epstein class. Peter Thiel is prominently featured in those files. So is Reid Hoffman, an early investor in OpenAI, referenced as "a very close friend" by Epstein.

Epstein's relationships with academics and other powerful men have also been scrutinized, and the rampant sexism and misogyny are well documented.

Also, per The Guardian:

As late as 2018, Epstein was invited to or attended dinners alongside the likes of Elon Musk, Jeff Bezos, Google founders Larry Page and Sergey Brin, Twitter co-founder Evan Williams, Microsoft founder Bill Gates...

These individuals are frequently referred to as "very intelligent men" or "high IQ people."

Why is that exactly?

V. Intelligence

In 2023, Microsoft released a paper titled Sparks of Artificial General Intelligence: Early Experiments with GPT-4.

In order to assess whether GPT-4 was demonstrating intelligence, they originally referenced the work of Linda Gottfredson, also a eugenicist and white supremacist, who has argued numerous times that most Black people have low IQ and are not employable.

Assessments of intelligence go back to the late 19th century, particularly through the works of Charles Spearman.

Spearman is credited with developing the idea of an intelligence G-Factor or general intelligence, a mathematical construct developed to categorize cognitive abilities and human intelligence.

As a corollary, the entire field of statistics, and by extension data science, has origins in eugenics.

A paper published in 2025 titled Dismantling the Logics of Eugenics Via Emancipatory Data Science Education details some of this history.

The US Eugenics Office and the American Eugenics Society, both founded in the early 20th century, coincided with legalized mass sterilization efforts in parts of Europe AND some US states.

The paper references Francis Galton, Karl Pearson, and Ronald Fisher, whom the authors describe as the founding fathers of statistics.

Statistics, driven by the political agenda of its founders, was the primary tool used to advance the eugenic discipline.

Today's algorithms use many of those same methodologies.

As an example, later in the paper:

Consider the case of simple linear regression. The goal of the model is to determine one equation that represents the majority of data points on a two-dimensional plane.... As people, we are not the same, and achieving sameness is not necessarily a desirable goal.

Well, unless, of course, one of your goals is eugenics.

Although there are ways to cover for statistical variances:

This siloing of experiences, combined with unilateral decision-making authority, leads to inconsistent data practices. It perpetuates unaccountable bad behaviors and codifies harm to people in vulnerable communities. [Emphasis mine]

This practice has been plainly illustrated in multiple academic papers, and perhaps most famously, in the 2016 book "Weapons of Math Destruction" by Cathy O'Neil.

She highlights algorithms responsible for hiring decisions, insurance claims, educational placement, law enforcement actions, and more—all reinforcing and perpetuating bias and inequality.

Tech companies would have us believe that AI safety teams are adding safeguards to protect users.

These safety teams seek technical approaches to questions of human values that are philosophical, cultural, or epistemological, per the Open Encyclopedia of Cognitive Science.

Consider this Ars Technica headline from a few months ago: "AI medical tools found to downplay symptoms of women, ethnic minorities".

Or this, ironically from the Stanford Report, "Researchers uncover AI bias against older working women".

A report by Cell Reports Medicine shows that AI-enhanced pathology frequently exhibits biases against underrepresented populations.

Even more sinister is the autonomous city of Prospera ZEDE in Honduras, a Thiel- and Andreessen-backed zone with its own political, judicial, and economic system that sidesteps Honduran law.

The zone has already welcomed transhumanists who are testing genetic enhancements, intent on building AI-assisted superhumans.

These zones are built for the wealthy, and prey on existing disenfranchised communities.

Again from the Open Encyclopedia of Cognitive Science:

Eugenic thinking fundamentally involves identifying eugenic traits and distinguishing between “more suitable” and “less suitable” kinds of people.

And later:

This link between humanity’s future and improving, augmenting, or replacing human intelligence with artificial general intelligence systems should at least raise questions about the ways in which science and politics are entwined in this ongoing intrigue with artificial general intelligence.

The dehumanization of data workers, who are erased from the conversation of artificial intelligence, is just one example of how this technology enacts eugenic thinking in real time.

VI. Technology

Now it's come full circle.

What is our role, as software engineers, as we reckon with these massive imbalances perpetuated by our industry?

So far I've made the case that the tech oligarchy is run by individuals who have demonstrated a contempt for women, people of color, and other marginalized groups.

I have provided evidence that links these leaders to overt eugenic ideals, all perversely propagated over the last century, in academia and the tech sector.

These ideals have morphed into a forward-looking belief system of transhumanism and techno-optimism that preys on marginalized groups.

Unless we actively recognize this as a threat, both to our immediate community and to the future of humanity, there's not much to do other than shrug our shoulders and hope things get better on their own.

But if instead, like me, you're outraged by the effects of a perverse power structure, built on century-old systems that are culling our societies AND immediate communities through misogyny and xenophobia, then we must act.

Help is not coming our way, and things will not get better on their own.

The tech lords seek to reduce human agency and restrict choice. Open software can provide choice and empower human agency.

But it must be built with empathy and compassion toward users.

Empathy inverts the current order. It is a key ingredient in mitigating bias, exploitation, and systemic racism and misogyny.

The goal here is not to shame you for using these LLM apparatuses. It is not to provide you with flawless logic, or to convince you to take an absolutist position.

Instead, it is a plea to take a proactive position that leans heavily in favor of people who also happen to not be billionaires.

VII. Tools

In order to be proactive, there are a few tools that can be helpful in framing our mindset.

Conceptual Clarity

The first of these is Conceptual Clarity.

I borrow this term from an excellent paper by Olivia Guest, Marcela Suarez, and Iris van Rooij titled "Towards Critical Artificial Intelligence Literacies".

I'm only highlighting the first of five concepts in their paper, but I highly recommend you check out the rest.

Of their collection of concepts, they write:

[This collection] rejects dominant frames presented by the technology industry, by naive computationalism, and by dehumanising ideologies.

In other words, we shouldn't allow the tech companies to define how we frame our ideas around this technology.

For example, it is extremely difficult to have competent or productive conversations about harm reduction within the ambiguous, terminological disarray of the term "artificial intelligence."

How do we discuss the issue of data work? Is this something that should be discussed within the realm of technology?

If so, how? If not, why not?

Or, why are users turning to chatbots as a way to cope with loneliness? What are some of the gaps in current technology that have led to this? Is this a problem that technology can or should solve? If so, how? If not, why not?

Interdisciplinary Collaboration

To aid conceptual clarity, I'll point you to the second tool: Interdisciplinary Collaboration.

I've tried to frame my thinking around expert voices that are both within and outside of the technology sphere. At the end of this post, I've condensed my Works Cited, plus Additional References that didn't quite make it to my original presentation.

Why is this important?

Well, why should our conversation about technology start and end with billionaires who mostly look the same and are motivated by similar ideals?

If we want to build with empathy, we must be willing to listen.

And to whom?

Listen to the very same people that eugenicists hate. Listen to people of color, women, and to first-person accounts of the disenfranchised.

Accept the wisdom of experts in adjacent fields—such as psychologists, sociologists, artists, philosophers, educators, historians, linguists, and so on.

Perhaps then we will learn how to build tools and software that truly fulfill the needs of our users.

Persistent Resistance

And lastly—Persistent Resistance.

We can't just wait for things to get better on their own.

Oppression doesn't work that way.

We must be persistent in our denunciation of racism and misogyny, and the institutionalized instruments that normalize them. And we must attempt to disengage from the systems of oppression.

But this is not done by winning arguments on the internet, or shaming others who have broadly embraced the technology.

Research shows that this tactic is self-defeating.

Instead, we must also engage with compassion, recognizing that certain paradigms are quite complex, and there aren't always easy answers.

For example, educational institutions that proctor standardized tests are also falling prey to historically racist practices. That doesn't necessarily mean that educators are acting in bad faith.

What I am saying is: Vehemently oppose systems of oppression, while maintaining empathy for people.

Again, open software can be a spoke in the wheel of these systems. It is a viable form of resistance.

These tech companies are powerful, dominant, and deplorable.

But they are vulnerable. They are vulnerable to people who care... and paradoxically, to those who care about others more than they care for themselves.

Let's look at this quote from The Stanford Daily again:

Cubberley and his cult of efficiency transformed the way education functioned, emphasizing production, cost reduction and standardized intelligence all shaped by race and heredity.

Productivity. Efficiency. Intelligence.

These terms are ripped right out of the marketing coming out of the big tech companies.

Under their economy, a software engineer might see a problem that STARTS with a VC cash infusion and an imagined solution for that problem.

Under an economy of empathy, a software empath sees a problem that starts with the affected population communicating their needs, which leads to a practical solution.

With conceptual clarity, interdisciplinary collaboration, and persistent resistance, let's build software that makes our world better.

Works Cited

Billy Perrigo, "Exclusive: OpenAI Used Kenyan Workers on Less Than $2 Per Hour to Make ChatGPT Less Toxic", TIME, January 18, 2023.

Erica Hellerstein, "Silicon Savanna: The workers taking on Africa’s digital sweatshops", Coda Story, October 11, 2023.

Ben Maldonado, "Eugenics on the Farm", The Stanford Daily, October 7, 2019.

Ben Maldonado, "Eugenics on the Farm: Ellwood Cubberley", The Stanford Daily, February 4, 2020.

"William Shockley", Southern Poverty Law Center. Retrieved March 29, 2026.

Yarden Katz, "Intelligence Under Racial Capitalism: From Eugenics to Standardized Testing and Online Learning", Monthly Review, Vol. 74, No. 04, September 2022.

John McCarthy, "Technology and the Position of Women", https://www-formal.stanford.edu/jmc/future/women.html, retrieved April 17, 2026.

"Labor Force Statistics from the Current Population Survey", U.S. Bureau of Labor Statistics. Retrieved April 2, 2026.

Nick Bostrom, "Apology for an Old Email", Personal blog, https://nickbostrom.com/oldemail.pdf, January 9, 2023.

Andrew Anthony, "‘Eugenics on steroids’: the toxic and contested legacy of Oxford’s Future of Humanity Institute", The Guardian, April 28, 2024.

Timnit Gebru and Émile P. Torres, "The TESCREAL bundle: Eugenics and the promise of utopia through artificial general intelligence", First Monday, Vol. 29, No. 4, April 1, 2024.

Alexander Zaitchik, "The Heavy Price of Longtermism", The New Republic, October 24, 2022.

"How to use your career to reduce risks from AI", 80,000 Hours. https://80000hours.org/ai/. Retrieved March 29, 2026.

Mathias Döpfner, "Jeff Bezos interview with Axel Springer CEO on Amazon, Blue Origin, family", Business Insider, April 28, 2018.

Karen Hao, "We read the paper that forced Timnit Gebru out of Google. Here’s what it says.", MIT Technology Review, December 4, 2020.

John Rennie, "The Immortal Ambitions of Ray Kurzweil: A Review of Transcendent Man", Scientific American, February 18, 2011.

Sam Altman, "-", Personal Blog, April 10, 2026.

Dario Amodei, "Machines of Loving Grace", Personal Blog, October 2024.

Laura Bullard, "The Real Stakes, and Real Story, of Peter Thiel’s Antichrist Obsession", September 30, 2025.

Marc Andreessen, "The Techno-Optimist Manifesto", Andreessen Horowitz, October 16, 2023.

Ethan Siegel, "Ask Ethan: Why isn’t Richard Feynman your personal hero?", Big Think, November 1, 2024.

"Zuckerberg, Hoffman, and Thiel Named in Epstein’s “Wild Dinner” Email", Moms Justice Alerts, February 2, 2026.

Rebecca Watson, "Epstein Files Reveal How Pathetic Richard Dawkins & Other Men Are", skepchick, February 5, 2026.

Jason Wilson, "‘The smart, the rich, the powerful’: Epstein associated with Silicon Valley elite years after his release from prison", The Guardian, February 3, 2026.

"Linda Gottfredson", Southern Poverty Law Center. Retrieved March 29, 2026.

Sébastien Bubeck et al., "Sparks of Artificial General Intelligence: Early experiments with GPT-4 (version 1)", arXiv:2303.12712v1, March 22, 2023.

Thema Monroe-White, C. Malik Boykin, and JaNiya Daniels, "Dismantling the Logics of Eugenics Via Emancipatory Data Science Education", IASE Conference Proceedings Series, March 16, 2025.

Abeba Birhane, "Algorithmic Bias", Open Encyclopedia of Cognitive Science, March 16, 2026.

Melissa Heikkilä, "AI medical tools found to downplay symptoms of women, ethnic minorities", Ars Technica, September 19, 2025.

Hope Reese, "Researchers uncover AI bias against older working women", Stanford Report, October 17, 2025.

Shih-Yen Lin et al., "Contrastive learning enhances fairness in pathology artificial intelligence systems", Cell Reports Medicine, Vol. 6, Issue 12, December 16, 2025.

Mario Munoz, "Honduras, Immigration, and Technology", Python By Night, March 3, 2026.

Robert A. Wilson, "Eugenic Thinking and the Cognitive Sciences", Open Encyclopedia of Cognitive Science, July 24, 2024.

Richard Kirk, "Intervention — 'For a Political Geography of Artificial Intelligence: Fighting Ghost Work, Exploitation, and the Making of a Global Digital Underclass'", Antipode Online, July 30, 2025.

Olivia Guest, Marcela Suarez, and Iris van Rooij, "Towards Critical Artificial Intelligence Literacies", Zenodo, December 2, 2025.

Additional References

There is an abundance of resources that contain far more wisdom than can fit in this one post.

Here are some more scattered references that informed my presentation, either directly or indirectly, but were not referenced in the actual talk.

Articles

"Why Silicon Valley is bringing eugenics back" - Also makes some of the same connections presented above

"Eugenics in the Twenty-First Century: New Names, Old Ideas" - More from Émile P. Torres, co-author of The TESCREAL Bundle

"Trump Soaks Up Praise from World's Biggest Tech CEOs at White House Dinner: See What Each of Them Said" - Absurdity

"Bizarre and Dangerous Utopian Ideology Has Quietly Taken Hold of Tech World" - Podcast episode also featuring Émile P. Torres. Includes the following quote:

If you look in the TESCREAL literature, you will find virtually zero reference to ideas about what the future ought to look like from non-Western perspectives, such as Indigenous, Muslim, Afro-futurism, feminist, disability rights, queerness, and so on, these perspectives.

"The janitors of the internet" - stories of workers who prop up "artificial intelligence" through their data work. Includes this quote:

We say: ‘The model learned’ while the actual humans doing the teaching remain invisible, unnamed, underpaid. This is not accidental language. It lets companies claim revolutionary breakthroughs while hiding an exploitative labour model. It lets us marvel at artificial intelligence while ignoring the very real, very human intelligence of workers who make these systems function.

"Biased bots: Human prejudices sneak into AI systems" - from 2017, but shows that even back then, biased data affected ML and early AI systems (it still does). From the article:

"Questions about fairness and bias in machine learning are tremendously important for our society," said Arvind Narayanan, assistant professor of computer science and member of the Center for Information Technology Policy at Princeton University. "We have a situation where these artificial intelligence systems may be perpetuating historical patterns of bias that we might find socially unacceptable and which we might be trying to move away from."

...

"The biases that we studied in the paper are easy to overlook when designers are creating systems," said Narayanan. "The biases and stereotypes in our society reflected in our language are complex and longstanding. Rather than trying to sanitize or eliminate them, we should treat biases as part of the language and establish an explicit way in machine learning of determining what we consider acceptable and unacceptable."

"Adults Lose Skills to AI. Children Never Build Them." - Psychology Today article about cognitive effects on adults vs children. From the article:

The model's statistical biases become the student's default framing. The model's reasoning structure becomes the student's reasoning structure. LLMs homogenize not just language but also perspective and reasoning strategies. The convergence tracks toward Western, educated, mainstream norms because that's what dominates training data and gets reinforced through alignment (Sourati et al., 2026).

"What Can Psychology Teach Us About AI’s Bias and Misinformation Problem?" - Research out of Berkeley from researcher Celeste Kidd.

"How AI can distort human beliefs" - Referenced in previous link. Contains:

Overhyped, unrealistic, and exaggerated capabilities permeate how generative AI models are presented, which contributes to the popular misconception that these models exceed human-level reasoning and exacerbates the risk of transmission of false information and negative stereotypes to people.

Social Media

https://mastodon.social/@APC/116447899546251592

https://denotation.link/@minimalparts/116379970497934325

https://mastodon.social/@jaystephens/116159635751248113

https://scholar.social/@olivia/116248763817728659

https://fire.asta.lgbt/notes/aj331i64th3m026q

Preprints / Papers

- Interactional foundations for critical AI literacies - Mark Dingemanse

- The homogenizing effect of large language models on human expression and thought - Zhivar Sourati, Alireza S. Ziabari, Morteza Dehghani

- Pygmalion Displacement: When Humanising AI Dehumanises Women - Lelia Erscoi, Annelies Kleinherenbrink, Olivia Guest

- How LLMs Distort Our Written Language - Marwa Abdulhai, Isadora White, Yanming Wan, Ibrahim Qureshi, Joel Z. Leibo, Max Kleiman-Weiner, Natasha Jaques

- Barriers to Equality in Academia - Prepared by female graduate students and research staff in the Laboratory for Computer Science and the Artificial Intelligence Laboratory at M.I.T. (This is from 1983!)

Podcasts

- The Data Fix - Recent episodes 76, 78, 79 are especially prescient.

- Mystery AI Hype Theater 3000 - By Emily Bender and Alex Hanna. Episode 69 focuses on AGI and Eugenics. Episode 75 references Appen, referenced in my talk.

- AI Skeptics with Cathy O'Neil, author of Weapons of Math Destruction. Highly recommend the episode from March 16 with Celeste Kidd.

- Dreaming Against the Machine - New podcast just launched by Adam Becker, author of "More, Everything, Forever".

Documentary

Ghost in the Machine by Valerie Veatch. Much of what is covered in this talk is in this documentary as well. I reviewed it here.