Using PDM for Your Next Python Project

Packaging, Dependencies, PEPs, Oh My!

August 11, 2022

I decided to dive into a topic perhaps all too complex for me at the moment, but I couldn't help myself. With my ever-increasing number of side-projects, I've been testing some deployment and build strategies. This has led me down the dark path of Python dependency management.

What is a Dependency?

When you build your Python application, you will most likely "depend" on certain libraries to get the job done.

If you're building a web scraper, you may need to use beautifulsoup4. If you're working with Excel, you may be using openpyxl, or if you're building a web app, maybe you're using fastapi.

You usually install these dependencies with pip. Something like:

pip install <package-name>

What you'll soon note is that those dependencies also have dependencies of their own. Sometimes it can seem like it's turtles all the way down!
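
If you're curious how deep the turtles go, Python can tell you what an installed package declares as its own requirements. Here's a minimal sketch using the standard library's importlib.metadata. The helper name direct_requirements is mine, made up for illustration, and it only reports on packages installed in whatever environment runs it.

```python
from importlib.metadata import PackageNotFoundError, requires


def direct_requirements(package: str) -> list[str]:
    """Return the requirement strings an installed package declares.

    Hypothetical helper: returns an empty list when the package is not
    installed in the current environment or declares no requirements.
    """
    try:
        declared = requires(package)
    except PackageNotFoundError:
        return []
    # requires() returns None when the package declares nothing.
    return list(declared or [])


if __name__ == "__main__":
    for requirement in direct_requirements("fastapi"):
        print(requirement)
```

Run it somewhere fastapi is installed and you should see entries such as starlette and pydantic, each of which has requirements of its own.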

Keeping Track

You may want to keep tabs on what dependencies you are using in your project. This is often done by creating a requirements.txt file. That might look something like:

fastapi==0.78.0

sqlmodel==0.0.6

uvicorn[standard]==0.18.2

pydantic[dotenv]
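
One detail worth noticing in the example above: three of the lines pin an exact version with ==, and the last one doesn't. As a rough illustration (not a real requirements parser), the sketch below splits the lines into pinned and unpinned. The function name split_pinned is something I made up, and it only understands simple lines like the ones shown.

```python
REQUIREMENTS = """\
fastapi==0.78.0
sqlmodel==0.0.6
uvicorn[standard]==0.18.2
pydantic[dotenv]
"""


def split_pinned(text: str) -> tuple[list[str], list[str]]:
    """Split simple requirement lines into (pinned, unpinned).

    Hypothetical helper: a line counts as pinned only if it contains '=='.
    Options, URLs, and environment markers are not handled.
    """
    pinned: list[str] = []
    unpinned: list[str] = []
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and full-line comments
        (pinned if "==" in line else unpinned).append(line)
    return pinned, unpinned


pinned, unpinned = split_pinned(REQUIREMENTS)
print("pinned:", pinned)
print("unpinned:", unpinned)
```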

Without getting lost in the weeds, suffice it to say that using requirements.txt as your sole dependency solution can become problematic down the road, particularly with applications you are maintaining, supporting, sharing, etc...

Note: Inversely, there are also plenty of times where using a requirements.txt file is perfectly fine, particularly in solo projects or other simple applications. This post is not about those times.

Why PDM?

As I've been creating an infinite number of side-projects and deploying them on free-tier accounts, I've been more and more interested in tools that offer dependency management.

As a side-effect of this curiosity, I started learning more about packaging in general, as well as the world of Python build tools. Yikes!

There are quite a few of them out there (see this awesome list by Chad Smith) that try to accomplish similar things, and that can seem daunting.

I was initially intrigued with PDM after perusing the documentation and reading a blog post by Frost Ming, the library's creator.

Although poetry seems to be a far more popular tool accomplishing a similar task, I thought I'd give PDM a try.

PEP 582?

Before I get into the details of PDM, there is a particular issue with Python development that remains a hurdle, particularly for newer developers.

When I install a package with pip, where does it go?

A lot of tutorials or guides will suggest a specific workflow when starting a new project. More specifically, creating a virtual environment.

But why?

Mostly, you want to isolate the dependencies relevant to your specific project. Once you start having a zillion side-projects (like me), you want them to be able to co-exist without mucking up anything else on your system.

Virtual environments solve this by isolating your Python interpreter, along with the dependencies you install, on a per-project basis.
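
If you ever want to check which side of that isolation you're on, the interpreter can tell you. This is a small sketch, assuming an environment created with the standard venv module or a recent virtualenv (both set sys.base_prefix). The function names are my own.

```python
import sys
import sysconfig


def in_virtualenv() -> bool:
    """True when the running interpreter belongs to a virtual environment."""
    # Inside a venv, sys.prefix points at the environment while
    # sys.base_prefix still points at the interpreter it was created from.
    return sys.prefix != sys.base_prefix


def install_location() -> str:
    """Directory where pure-Python packages get installed for this interpreter."""
    return sysconfig.get_paths()["purelib"]


if __name__ == "__main__":
    print("virtual environment:", in_virtualenv())
    print("packages install to:", install_location())
```

Run it once with your system Python and once with a virtual environment activated, and the install location should change accordingly.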

A few years ago, PEP 582 was proposed in order to address this Python quirk (namely, the need to create/activate a virtual environment).

The idea was for Python to recognize a __pypackages__ directory within your project, and prefer using this directory to import packages, as opposed to local/global packages contained in other locations.

Phew! That's a mouthful.

Visually, your project structure might look like:

project_root/

┣ __pypackages__ # PEP 582!

┣ app/ # Your application

┃ ┣ main.py

┃ ┗ __init__.py

┗ README.md

Without getting into the technical details, your Python interpreter would know where to find your dependencies for your specific project based on the existence of the __pypackages__ directory.
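
To make that a bit more concrete, here is a rough emulation of the lookup. It is only a sketch: it assumes the __pypackages__/<major>.<minor>/lib layout described in the PEP, and it fiddles with sys.path by hand, which is not how the interpreter or PDM would actually do it. The function names are hypothetical.

```python
import sys
import tempfile
from pathlib import Path


def pypackages_lib(project_root: Path) -> Path:
    """Path PEP 582 describes for this interpreter's local packages."""
    version = f"{sys.version_info.major}.{sys.version_info.minor}"
    return project_root / "__pypackages__" / version / "lib"


def prefer_local_packages(project_root: Path) -> bool:
    """Put the project's __pypackages__ lib directory first on sys.path.

    Returns False (and changes nothing) when the directory does not exist.
    """
    lib = pypackages_lib(project_root)
    if not lib.is_dir():
        return False
    entry = str(lib)
    if entry in sys.path:
        sys.path.remove(entry)
    # Index 0 means imports look here before site-packages.
    sys.path.insert(0, entry)
    return True


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        print("before creating the directory:", prefer_local_packages(root))
        pypackages_lib(root).mkdir(parents=True)
        print("after creating the directory:", prefer_local_packages(root))
        sys.path.remove(str(pypackages_lib(root)))  # tidy up after the demo
```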

You can read more about PEP 582 if you're curious, but some of you may be wondering why I even went down that road to begin with.

Finally, PDM

If you have read anything on this blog, you may get the sense that it usually takes me a long time to finally get to it. This is no different. (Sorry!)

But the reason I wanted to talk about PEP 582 in the first place is that I first started using PDM before the 2.0 release.

Before that release, PDM used PEP 582 by default. In other words, it was an implementation of how one could go about using a __pypackages__ directory, per the PEP guidance.

However, when version 2.0 was released, Frost Ming wrote on his blog that PDM support for PEP 582 would be opt-in going forward:

When PDM was first created, it was advertised as a package manager supporting the PEP 582 package structure. However, after two years of waiting, the PEP 582 is still in Draft status and has been slow to progress... Now I hope that PDM is not only a project of personal interest, but also a package manager that supports the latest Python packaging standards and aims at general developers. So in 2.0, we made virtualenv the default setup for the project.

I have tested both versions (pre and post 2.0), and I must say, I'm a fan of the PEP 582 implementation. Your mileage may vary, but if you have time to spare, I'd give it a whirl.

With that said, the rest of this post does not use PEP 582, opting instead for the more common virtualenv method.

Starting A Project

To be honest, PDM has pretty great documentation, so I'm not going to retread much of what's already been done.

While I have not exhausted many of the features, I have enjoyed the simplicity of getting started and replicating a build as part of a deploy on platform.sh.

Now, to use PDM, you have to have it installed on your system. I chose to install it with pipx. (I may have to do another writeup on pipx, come to think of it. You'll need to have pipx installed too; turtles all the way down.)

pipx install pdm

Then, when starting a new project, create your new directory:

mkdir pdm-demo

cd pdm-demo

After that, you just need a simple command:

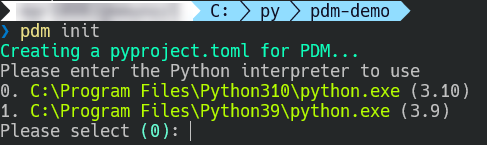

pdm init

What happens here is pretty awesome, really. PDM finds the versions of Python that are already installed on your system.

Previously, PDM would default to using PEP 582 __pypackages__ as explained above, but as of 2.0, it now asks if you would like to create a virtualenv with your chosen interpreter.

This creates a .venv directory in your current directory.
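
For a feel of what that step amounts to, the standard library can do something similar on its own. This is only an analogy, not PDM's actual code: it uses the stdlib venv module, and create_project_venv is a name I made up. I'm passing with_pip=False just to keep it quick.

```python
import tempfile
import venv
from pathlib import Path


def create_project_venv(project_dir: Path) -> Path:
    """Create a .venv directory inside project_dir and return its path."""
    env_dir = project_dir / ".venv"
    # with_pip=False skips bootstrapping pip, so this finishes quickly.
    venv.create(env_dir, with_pip=False)
    return env_dir


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        env_dir = create_project_venv(Path(tmp))
        # pyvenv.cfg records which interpreter the environment was built from.
        print((env_dir / "pyvenv.cfg").read_text())
```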

The CLI guides you through a few more questions. It has sensible defaults, so you can just zoom through them.

At the conclusion, PDM also generates a pyproject.toml file that conforms to PEP 517 and PEP 621.

Err, what? What is a pyproject.toml file?

More turtles.

Here is an article by Brett Cannon if you want to know more about what any of this means.

Anyway, here's what the pyproject.toml file might look like:

[project]

name = ""

version = ""

description = ""

authors = [

{name = "Mario Munoz", email = "pythonbynight@gmail.com"},

]

requires-python = ">=3.10"

license = {text = "MIT"}

[build-system]

requires = ["pdm-pep517>=1.0.0"]

build-backend = "pdm.pep517.api"

Dependencies

That's all well and good, but I started this writeup talking about dependencies. What happens next?

Going forward, instead of using pip as your package manager, you can now use PDM to add any libraries your project will depend on. For example, if I want to start a FastAPI project, I'll just do this:

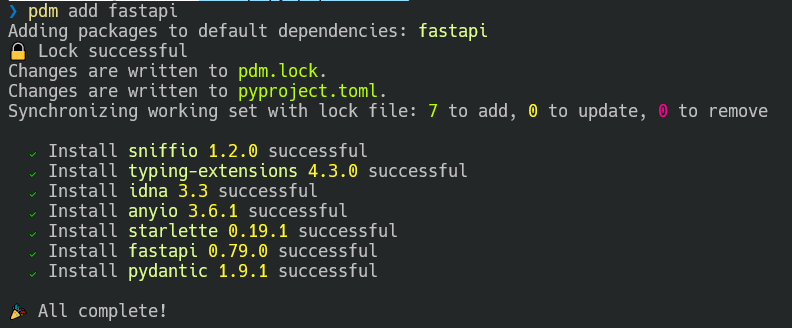

pdm add fastapi

The result ends up looking something like this:

You'll notice that a few things are happening.

The first is "Changes are written to pdm.lock". This lock file records FastAPI along with the dependencies it pulls in. In short, it is sort of like a "requirements.txt" file, specifically for your own project, but it also contains additional metadata and is meant to prevent dependency conflicts in the future.

Next, you'll see "Changes are written to pyproject.toml", which means that your dependency is now stored in that file.

Now it looks like this:

[project]

name = ""

version = ""

description = ""

authors = [

{name = "Mario Munoz", email = "pythonbynight@gmail.com"},

]

dependencies = [

"fastapi>=0.79.0",

]

requires-python = ">=3.10"

license = {text = "MIT"}

[build-system]

requires = ["pdm-pep517>=1.0.0"]

build-backend = "pdm.pep517.api"

[tool]

[tool.pdm]

You now see that your dependency on fastapi has been added to your pyproject.toml file.
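
Since pyproject.toml is plain TOML, any tool (or your own script) can read the same metadata. Here's a small sketch, assuming Python 3.11+ for tomllib, with the third-party tomli backport as a fallback. The embedded document is a trimmed copy of the file above, and declared_dependencies is a hypothetical helper.

```python
try:
    import tomllib  # standard library from Python 3.11
except ModuleNotFoundError:
    import tomli as tomllib  # third-party backport with the same API

# Trimmed copy of the pyproject.toml shown above (authors and license omitted).
PYPROJECT = """\
[project]
name = ""
version = ""
dependencies = [
    "fastapi>=0.79.0",
]
requires-python = ">=3.10"

[build-system]
requires = ["pdm-pep517>=1.0.0"]
build-backend = "pdm.pep517.api"
"""


def declared_dependencies(pyproject_text: str) -> list[str]:
    """Return the PEP 621 dependencies listed under [project]."""
    data = tomllib.loads(pyproject_text)
    return list(data.get("project", {}).get("dependencies", []))


print(declared_dependencies(PYPROJECT))
```

For a real project you would read the file from disk instead of a string, but the lookup is the same.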

By this point, you should be well on your way to working on your project, and you won't have to worry about creating/activating your virtual environment.

And So On

This only scratches the surface. PDM allows for a whole lot more, such as adding development-only dependencies, local dependencies, specifying alternate lockfiles to use, adding your own scripts/hooks, and much more.

When replicating your project, you can use pdm install to install the packages in the lock file.

That's right, you won't need a requirements.txt at all. Of course, you can still generate one if you wish, but if you're already using PDM, you won't really need to.
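
To show how the lock file relates to a requirements.txt, here is a rough sketch that turns lock entries into pinned lines. A few loud assumptions: the sample lock content is hand-written and heavily abbreviated (the versions are only illustrative), I'm assuming the lock file keeps one [[package]] table per resolved package with name and version keys, and lock_to_requirements is a hypothetical helper. For real projects, PDM's own export command is the better route.

```python
try:
    import tomllib  # standard library from Python 3.11
except ModuleNotFoundError:
    import tomli as tomllib  # third-party backport with the same API

# Hand-written, abbreviated sample. Not a real pdm.lock; versions are illustrative.
SAMPLE_LOCK = """\
[[package]]
name = "starlette"
version = "0.19.1"

[[package]]
name = "fastapi"
version = "0.79.0"
"""


def lock_to_requirements(lock_text: str) -> list[str]:
    """Render each locked package as a pinned 'name==version' line."""
    data = tomllib.loads(lock_text)
    packages = data.get("package", [])
    return sorted(f"{pkg['name']}=={pkg['version']}" for pkg in packages)


print("\n".join(lock_to_requirements(SAMPLE_LOCK)))
```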

What's also great about PDM is that by conforming to PEP 621, you can use other backends like flit-core, hatchling, and setuptools interchangeably.

You could use PDM exclusively as a dependency management tool (as explained here), and use something else (like flit) to build and package your project.
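
In practice, swapping backends mostly comes down to the [build-system] table. The sketch below renders that table for a few backends. The entry points are the ones each project documents as far as I know, but the version bounds are only examples, so check each backend's docs before copying them. BACKENDS and build_system_table are names I made up.

```python
# backend name -> (build requirements, build-backend entry point)
# The pdm entry matches the file generated earlier in this post;
# the version bounds for the others are examples, not recommendations.
BACKENDS = {
    "pdm": (["pdm-pep517>=1.0.0"], "pdm.pep517.api"),
    "flit": (["flit_core>=3.2,<4"], "flit_core.buildapi"),
    "hatchling": (["hatchling"], "hatchling.build"),
    "setuptools": (["setuptools>=61"], "setuptools.build_meta"),
}


def build_system_table(backend: str) -> str:
    """Render a [build-system] table for one of the backends above."""
    requires, entry_point = BACKENDS[backend]
    quoted = ", ".join(f'"{item}"' for item in requires)
    return (
        "[build-system]\n"
        f"requires = [{quoted}]\n"
        f'build-backend = "{entry_point}"\n'
    )


print(build_system_table("hatchling"))
```

The [project] table stays the same in each case, which is the point of having the metadata standardized.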

Not to say that PDM can't do those things either. As of version 2.0, PDM also supports publishing directly to PyPI, but that's a story for another day.

Hopefully that gives you an overview of how you could use PDM as a dependency manager. Also, it goes without saying, if you already have a system that works for you, there's no need to change your tools and workflow.

But if you have the time to try something new, or you're looking for a streamlined solution for dependency management, this is definitely worth a look.

Quick Links

I referenced a lot of different sources for this, so I wanted to consolidate that wealth of info in one place.

- PDM

- What's New In PDM 2.0? by Frost Ming

- PEP 517 - A build-system independent format for source trees

- PEP 582 - Python local packages directory

- PEP 621 - Storing project metadata in pyproject.toml

- What the heck is pyproject.toml? by Brett Cannon

- The Big List of Python Packaging and Distribution Tools by Chad Smith